
For several years I managed the 3rd line site reliability operation for many of the world’s busiest gambling sites, working for a little-known company that built and ran the core backend online software for several businesses that each at peak could take tens of millions of pounds in revenue per hour. I left a couple of years ago, so it’s a good time to reflect on what I learned in the process.

In many ways, what we did was similar to what’s now called an SRE function (I’m going to call us SREs, but the acronym didn’t exist at the time). We were on call, had to respond to incidents, made recommendations for re-engineering, provided robust feedback to developers and customer teams, managed escalations and emergency situations, ran monitoring systems, and so on.

The team I joined was around 5 engineers (all former developers and technical leaders), which grew to around 50 engineers of more mixed experience across multiple locations by the time I left.

I’m going to focus here on process and documentation, since I don’t think they’re talked about usefully enough where I do read about them.

If you want to read something far longer, Google’s SRE book is a great resource.

Process

Process is essential to running and scaling an SRE operation. It’s the core of everything we achieved. When I joined the team, habits were bad – there was a ticketing system, but one-journal resolutions were not uncommon (‘Site down. Fixed, closing.’).

An SRE operation is basically a factory processing information and should act accordingly. You wouldn’t have a factory running without processes to take care of the movement of goods, and by the same token you shouldn’t have a knowledge-intensive SRE operation running without processes to take care of the movement of knowledge.

One frequent objection to process I heard is that it ‘stifles creativity’. In fact, effective process (bad process implemented poorly can mess anything up!) clears your mind to allow creative thought.

A great book on this subject is ‘The Checklist Manifesto’, which inspired many of the changes we made, and was widely read within the team. It cites the example of the aviation industry’s approach to process, which enables remarkable creativity under stressful conditions by making routine operations mentally automatic. There’s even a film about one of the incidents the book discusses, and the pilot himself cited checklists and routine as enablers of his fast thinking, creativity and control in that stressful situation. In fact, we used a similar process ourselves: in emergency situations, an experienced engineer would dive into finding a solution, while a more junior one would follow the checklist.

Another critique of process is that it can inhibit effective working and collaboration. It absolutely can, if process is treated as an entity justified by its own existence rather than as another living asset. The only thing that can guard against this is culture. More on that later.

Process – Tooling

The first thing to get right is the ticketing system. As with monitoring solutions, people obsess over which ticketing system is best, and they are wrong to. Whichever ticketing system you use, you will generally end up preferring it simply through familiarity. A ticketing system is only bad if it drives or encourages bad processes, and what counts as a bad process depends on the constraints of your business.

It’s far more important to have a ticketing system that functions reliably and supports your processes than to bend your processes around the ticketing system.

Here’s an example. We moved from RT to JIRA during my tenure. JIRA offered many advantages over RT, and I would generally recommend JIRA as a collaborative tool. The biggest problem we had switching, however, was the loss of some functionality we’d built into RT, which was critical to us. RT allowed us to get real-time updates on tickets, which meant that collaboration on incidents was somewhere between chat and ticketing. This record was invaluable in post-incident review. RT also allowed us to hide entries from customers, which again was really hard to lose. We got over it, but these things were surprisingly important because they’d become embedded in our process and culture.

When choosing or changing your ticketing system, think about what’s really important to operations, not specific features that seem nice on a list. What’s important to you can range from how nice it looks (seriously – your customers might take you more seriously, and your brand might be about good design) to how powerful the reporting tools are.

Documentation

After process, documentation is the most important thing, and the two are intimately related.

There’s a whole book to be written about documentation, because, again, people focus on the wrong things. The critical thing to understand is that documentation is an asset like any other. Like any business asset, documentation:

- If properly looked after, will return investment many times over

- Requires investment to maintain (like the fabric of a factory)

- If out of date, costs money simply because it’s there (like out-of-date inventory)

- If of poor quality or unusable, is a liability, not an asset

But this is not controversial – few people disagree with the idea that good documentation is useful. The point is: what do you do about it?

Documentation – Where We Were

We were in a situation where documentation provided to us was not useful (eg from devs: ‘a network partition is not covered here as it is highly unlikely’. Well, guess what happened! And that was documentation they kindly bothered to write…), or we simply relied on previously-journalled investigations (by this time we were writing things down) to figure out what to do next time something similar happened.

This was frustrating all of us, and we spent a long time complaining about the documentation fairy not visiting us before we took responsibility for it ourselves.

Documentation – What I Did

Here’s what I did.

- I took two years’ worth of priority incidents (ie those that triggered – or would have triggered – an out of hours call), and listed them. There were over 1700 of them.

- Then I categorised them by type of issue.

- Then I went through each type of issue and summarised the steps needed to either resolve it, or get to a point where escalation was required.
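
The categorise-and-count step is simple enough to sketch. The Python below is purely illustrative, not the tooling used at the time: it assumes the priority tickets have already been exported and each one tagged by hand with a category, and the `Incident` fields, ticket IDs and category names are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Incident:
    # Hypothetical fields for an exported priority ticket.
    ticket_id: str
    summary: str
    category: str  # assigned by hand while reading the ticket history


def category_counts(incidents):
    """Return (category, count) pairs, most frequent category first."""
    return Counter(incident.category for incident in incidents).most_common()


incidents = [
    Incident("T-1", "Site down after release", "deployment"),
    Incident("T-2", "Payments delayed for some users", "payment-gateway"),
    Incident("T-3", "Release rolled back after errors", "deployment"),
]

for category, count in category_counts(incidents):
    print(f"{category}: {count}")
```

Run as-is, this prints `deployment: 2` then `payment-gateway: 1`. Counting is the trivial part; the reading and summarising behind each category is where the effort goes.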

This took seven months of my full-time attention. I was a senior employee and I was costing my company lots of money to sit there and write. And because I had a clueful boss, I never got questioned about whether this was a good use of time. I was trusted (culture, again!). I would say it took four months before any dividends at all were seen from this effort. I remember this four-month period as a nerve-wracking time, as my attention was taken away from operations to what could have been a complete waste of my time and my employer’s money and an embarrassing failure.

Why not give it to an underling to do? For a few reasons. It was so important, and so new to us, that I needed to know it was being done properly. I knew exactly what was needed, so I knew I could write it in a way that would be useful to me at the very least. I was also a relatively experienced writer (arts grad, former journalist), so I liked to think that would help me write well.

We called these ‘Incident Models’ as per ITIL, but they can also be called ‘run books’, ‘crib sheets’, whatever. It doesn’t matter. What mattered was:

- They were easy to find/search for

- It was easy to identify whether you got a match

- They were not duplicated

- They could be trusted

We put this documentation in plain text within the ticketing system, under a separate JIRA project.

The documentation team got wind of what we were up to and tried to pressure us to use an internal wiki for this. We flat-out refused, and that was critical: the documentation system’s colocation with the ticketing system meant that searching and updating the documentation had no impedance mismatch. Because it was plain text it was fast, simple to update, and uncluttered. We resisted process that jeopardised the utility of what we were doing.
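
Keeping the models as plain text is part of what made them easy to search. JIRA’s own search did that job for the team; purely as an illustration of how little machinery plain text needs, here is a naive keyword-ranking sketch in Python. The model titles, bodies and the scoring rule (number of shared words) are assumptions, not anything taken from the original system.

```python
import re


def words(text):
    """Lower-case word set, so matching ignores case and punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_models(models, query):
    """Return model titles ranked by how many query words they contain.

    `models` maps a title to the plain-text body of an incident model.
    Models sharing no words with the query are left out.
    """
    query_words = words(query)
    scored = []
    for title, body in models.items():
        hits = len(query_words & words(title + " " + body))
        if hits:
            scored.append((hits, title))
    # Most hits first; ties broken alphabetically so results are stable.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [title for _, title in scored]


models = {
    "Database disk full": "Writes failing because the database volume has no free space.",
    "Network partition between sites": "Nodes in one site cannot reach the other site.",
}

print(search_models(models, "database out of disk"))
```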


Documentation and the Criticality of De-cluttering

When we started, we designed a schema for these Incident Models which was a thing of beauty, covering every scenario and situation that could crop up.

In the end it was almost a complete waste of time. What we ended up using was a really dumb structure of:

- Statement of problem

- Steps 1-n of what to do

- Further/deeper discussion, related articles

That was it. Attempts to structure it more thoroughly all failed, as the result was either confusing to newcomers, created too much administrative overhead, or didn’t cover enough. Some articles developed their own schema over time that was appropriate to the task, and new categories (eg the ‘jump-off’ article that told you which article to go to next) evolved. We couldn’t design for these things in advance because we didn’t know what would work and what would not.
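
That three-part structure is simple enough to express as a tiny data type that renders to plain text. This Python sketch is illustrative only: the `IncidentModel` class, its field names and the example content are hypothetical, and the real models were simply plain-text JIRA entries.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class IncidentModel:
    problem: str        # statement of problem
    steps: List[str]    # steps 1-n: resolve, or reach the point of escalation
    further: List[str] = field(default_factory=list)  # deeper discussion, related articles

    def to_text(self) -> str:
        """Render the model as plain text, numbering the steps from 1."""
        lines = ["Problem:", self.problem, "", "Steps:"]
        lines += [f"{n}. {step}" for n, step in enumerate(self.steps, start=1)]
        if self.further:
            lines += ["", "Further discussion / related articles:"]
            lines += [f"- {item}" for item in self.further]
        return "\n".join(lines)


model = IncidentModel(
    problem="Customer reports that users cannot log in.",
    steps=[
        "Check whether the login service is responding.",
        "Search for tickets raised against this customer in the last 24 hours.",
        "If still unresolved, escalate to the on-call tech lead.",
    ],
    further=["Login service design notes"],
)
print(model.to_text())
```

A ‘jump-off’ article could be expressed the same way, with steps that simply point to other articles.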

Call it ‘agile documentation’ if you want – agile’s what sells these days (it was ITIL back then). Again, what was critical was that simplicity and utility trumped everything else.

There Is No Documentation Fairy

After all this time and effort, a couple of other things became clear regarding documentation.

First, we gave up accepting documentation from other teams. If they commented code, great, if there was something useful on the wiki for us to find, also great. But when it came to handing over projects we stopped ‘asking for documentation’. Instead we’d arrange sessions with experienced SREs where the design of the project would be discussed.

Invariably (assuming they had no ops experience), the developer would focus on the things they’d built and how it worked – and these things were often the most thoroughly tested and least likely to fail.

By contrast, the SRE would focus on the weak points, the things that would go wrong. ‘What happens if the network gets partitioned? What if the database runs out of disk? Can we work out from the logs why the user didn’t get paid?’

We’d then go away and write our own documentation and get the engineer to sign off on it – the reverse of the traditional flow! They’d often make useful comments and give us added insights in the process.

The second thing we noticed was that our engineers were still reluctant to update the docs, even though only they were using them. There was still a sense that documentation should be handed to them. The leadership had to constantly reinforce that this was their documentation, not tablets of stone handed down from on high, and that if they didn’t constantly maintain it, it would become useless.

This was a cultural problem and took a long time to undo. Undoing it also required the documentation changes to be reinforced by process.

In the end, I’d say about 10% of the ongoing working time was spent maintaining and writing documentation. After the initial 7-month burst, most of that 10% was spent on maintenance rather than producing new material.


Documentation – Benefits

After getting all this documentation done, we experienced benefits far in excess of the 10% ongoing cost. To call out a few:

- Easier onboarding

Before this process started we were reluctant to take on less experienced staff. After, onboarding became a breeze. Among other things the training involved following incidents as they happened and shadowing more experienced staff. New staff were tasked with helping maintain docs, which helped them understand what gaps they had in their knowledge.

- Better training

The docs gave us a resource that allowed us to identify training requirements. This ended up being a curriculum of tools and techniques that any engineer could aim to get a working knowledge of.

- Less stress through simpler escalation

This was a big one. Before we had the step-by-step incident models, deciding when to escalate was stressful. Some engineers had a reputation for escalating early, and all were insecure about whether they’d ‘missed something obvious’ before calling a responsible tech lead out of hours. SREs would also get called out for not escalating early enough!

The incident models removed that problem. Pretty soon, the first question an escalated-to techie asked was ‘Have you followed the incident model?’ If so, and something obvious had still been missed, then the gaps in the model became clear and were quickly fixed. Soon, non-SREs were busy updating and maintaining the docs themselves, to save time when they were escalated to. It became a virtuous circle.

- Better discipline

The obvious value of documentation to the team helped improve discipline in other respects. Interestingly, SREs previously had the reputation for being the ‘loudest’ team – there was often a lot of ‘lively’ debate, and the team was very social – which made sense, as we relied on each other as a team to cover a large technical area, dealt with often non-technical customer execs, and sharing knowledge and culture was critical.

As time progressed, the team became quieter and quieter – partly due to the advent of chatrooms, increased remote working, and international teams, but also due to the fact that so much of the work became routine: follow the incident model, when you’re done, or don’t understand something, escalate to someone more senior.

- Automation

Turning the investigations into routine, documented steps cleared the way to automate them further with software.

Having metrics on which tickets were linked to which incident models meant that we knew where best to focus our effort. We wrote scripts to comb through log files in the background, make encoding issues quicker and simpler to figure out, automate responses to customers (‘Issue was caused by a change made by app admin user XXX’), and a lot more.

These automations inspired an automation tool we built for ourselves based on pexpect: http://ianmiell.github.io/shutit/ But that’s another story. Basically, once we got going it was a virtuous circle of continuous improvement.
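
The two automation ideas described above, using ticket-to-model links to decide where to focus and drafting canned customer responses from log evidence, can be sketched in a few lines of Python. This is not the team’s actual tooling: the ticket IDs, model names, the audit-log line format (`CONFIG_CHANGE user=...`) and both function names are hypothetical.

```python
import re
from collections import Counter


def automation_candidates(ticket_links, top_n=3):
    """Rank incident models by how many tickets were linked to them.

    `ticket_links` maps a ticket ID to the incident model it was resolved with.
    """
    return Counter(ticket_links.values()).most_common(top_n)


# Hypothetical audit-log format: "<timestamp> CONFIG_CHANGE user=<name> ..."
ADMIN_CHANGE = re.compile(r"\bCONFIG_CHANGE user=(\S+)")


def admin_change_response(audit_lines):
    """Draft a customer reply if the log shows an admin configuration change.

    Returns None when no change is found, so a human picks the ticket up.
    """
    for line in audit_lines:
        match = ADMIN_CHANGE.search(line)
        if match:
            return f"Issue was caused by a change made by app admin user {match.group(1)}"
    return None


ticket_links = {
    "T-101": "login-failure", "T-102": "disk-full", "T-103": "login-failure",
    "T-104": "login-failure", "T-105": "disk-full", "T-106": "payment-delay",
}
print(automation_candidates(ticket_links))

audit = [
    "2015-03-02T10:14:00 LOGIN user=bob",
    "2015-03-02T10:15:30 CONFIG_CHANGE user=alice setting=max_stake",
]
print(admin_change_response(audit))
```

Returning `None` rather than a guess keeps the automation conservative: anything it can’t explain still goes through the normal incident model.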

Back to Process

Given you have all these assets, how do you prevent them from degrading in value over time? This is where process is critical.

Two processes were critical in ensuring everything continued smoothly: triage and post-incident review.

Process – Triage

5%-10% of time was spent on the triage process. Again, it took a long time to get the process right, but it resulted in massive savings:

- Reduce the steps to the minimum useful steps

It’s so tempting to put as much as possible into your triage process, but it’s vital to prioritise the value of each step over completeness. Any step that is not often useful tends to get skipped over and ignored by the triager.

- Focus on saving cost in the process

Looking for duplicates, finding the relevant incident model, getting back to the customer quickly, and escalating early all reduced the cost per ticket significantly. They also saved other engineers the context switch of being asked a question while thinking about something else. It’s hard to evaluate the benefits of these items precisely, but we were able to deal with increased volumes of incidents with fewer people and less difficulty. Senior management and customers noticed.

Recording the details of these efforts also saved time: for example, an engineer given a triaged ticket could see which string the triager had used to search for previous incidents, and perhaps improve on it. It also meant that more experienced staff could review the triage quality.

- Review triage

Experienced staff need to review the triage process regularly to ensure it’s actually being applied effectively.

When I moved to another operations team (in a domain I knew far less about), I cut the incident queue in half in about 3 days, just by applying these techniques properly. The triage process was there, but it wasn’t being followed with any thought or oversight, and was given to a junior member of staff who was not the most capable. Big mistake. Triage must be done – or overseen – by someone with a lot of experience, as while it looks routine and mechanical it involves a lot of significant decisions that rest on experience in the field.

And yes, I was the new boss, and I chose to spend my first week doing the ‘lowly’ task of triage. That’s how important I thought it was.

- Rota the task

No-one wants to do triage for long, so we rota’d it per week. This allowed some continuity and consistency, but stopped engineers from going crazy by spending too long doing the same task over and over.
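
Two of the triage points above, recording every search the triager made and rotating the task weekly, are easy to make concrete. The Python below is a hypothetical sketch rather than anything the team ran: the `TriageRecord` fields, the exact-match duplicate check (deliberately naive) and the keyword-to-model lookup are all assumptions.

```python
import datetime
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class TriageRecord:
    ticket_id: str
    searches: List[str] = field(default_factory=list)  # kept so reviewers can judge (and improve) them
    duplicate_of: Optional[str] = None
    incident_model: Optional[str] = None


def triage(ticket_id: str, summary: str, open_tickets: Dict[str, str],
           model_keywords: Dict[str, str]) -> TriageRecord:
    record = TriageRecord(ticket_id)
    text = summary.strip().lower()

    # Duplicate check: a naive exact match on normalised summaries.
    record.searches.append(text)
    for other_id, other_summary in open_tickets.items():
        if other_summary.strip().lower() == text:
            record.duplicate_of = other_id
            break

    # Incident model lookup: keywords are assumed lower case; first match wins.
    for keyword, model in model_keywords.items():
        record.searches.append(keyword)
        if keyword in text:
            record.incident_model = model
            break
    return record


def triager_for(day: datetime.date, engineers: List[str]) -> str:
    """Weekly rota: every day in the same ISO week gets the same triager.

    Week numbers restart each year, so the rotation can jump at New Year.
    """
    week = day.isocalendar()[1]
    return engineers[week % len(engineers)]


record = triage(
    "T-9", "Site down for UK customers",
    open_tickets={"T-7": "Site down for UK customers"},
    model_keywords={"disk": "Database disk full", "site down": "Site unavailable"},
)
print(record)
print(triager_for(datetime.date(2024, 1, 1), ["ana", "ben", "caz"]))
```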

Process – Post-Incident Review

The mirror image of triage was the ‘post incident review’. Every ticket was reviewed by an experienced team member. Again, this was a process that took up about 5% of effort, but was also significant.

A standard form was filled out and any recommendations were added to a list of backlog ‘improvement’ tasks which could be prioritised. This gave us a number for technical/process debt that we wanted to look at.
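
The flow from review form to backlog, and from backlog to a single debt number, can be sketched like this. It’s a hypothetical Python illustration: the `ReviewForm` fields, including the check on whether the incident model was followed, are assumptions about what such a form might hold, not a copy of the one used.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ReviewForm:
    ticket_id: str
    followed_incident_model: bool
    recommendations: List[str] = field(default_factory=list)


def build_backlog(reviews: List[ReviewForm]) -> List[Tuple[str, str]]:
    """Collect every recommendation as a (ticket_id, task) backlog item."""
    return [(r.ticket_id, task) for r in reviews for task in r.recommendations]


reviews = [
    ReviewForm("T-1", True, ["Add a disk-space alert"]),
    ReviewForm("T-2", False),
    ReviewForm("T-3", True, ["Update the login incident model", "Add retries to the payment job"]),
]
backlog = build_backlog(reviews)
print(f"Improvement backlog: {len(backlog)} items")
```

Keeping the originating ticket ID alongside each task makes it possible to see which tickets generated the most improvement work when prioritising.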

Culture

I’ve mentioned culture a few times, and it’s what you always return to if you’re trying to enact any kind of change at all, since culture is at root a set of conceptual frameworks that underlie all our actions.

I’ve also mentioned that people often focus on the ‘wrong thing’. Time and again I hear people focus on tools and technology rather than culture. Yes, tools and technology are important, but if you’re not using them effectively then they are worse than useless. You can have the best golf clubs in the world, but if you don’t know how to swing and you’re playing baseball then they won’t help much.

Culture requires investment far more than technology does (I invested over half a year just writing documentation, remember). If the culture is right, people will look for the right tools and technology when they need to.

When given a choice about what to spend time and money on, always go for culture first. It cost me a lot of budget, but forcibly removing an ‘unhelpful’ team member was the best thing I did when I took over another team. The rest of the team flowered once he left, no longer stifled by his aggressive behaviour, and many things got done that didn’t before.

We also built a highly effective team with a budget so small that recruiters would phone me up to yell at me that what I was looking for was ‘impossible’. But by focussing on the right behaviours, investing time in the people we found, and having good processes in place, we got an extremely effective and loyal team whose members all went on to bigger and better things within and outside the company (but mostly within!).

Politics

A quick word on politics. You’ve got to pick your battles. You’re unlikely to get the resources you need, so drop the stuff that won’t get done to the floor.

Yes, you need a monitoring solution, better documentation, better trained staff, more testing… you are not going to get all these things unless you have a money machine, so pick the most important and try and solve that first. If you try and improve all these things at once, you will likely fail.

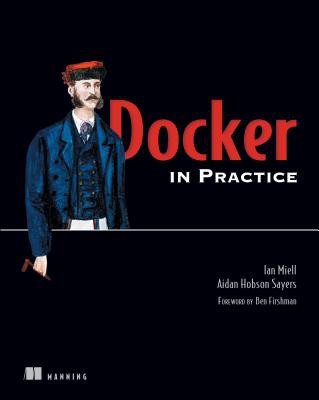

After process, and documentation, I tried to crack the ‘reproducible environment’ puzzle. That led me to Docker, and a complete change of career. I talk about these things a little here and here.

Any Questions?

Reach me on twitter: @ianmiell

Or LinkedIn


Thanks for sharing this with us.

Recently, I’ve been working more than ever with servers (AWS) and came to realize that having a defined structure when deploying helps me automate the whole process even more. Just as you did back then, I’ve been taking some time to document what I’ve done (and am doing) so that later on it benefits me and my coworkers.

Having a defined process and a clear documentation, in my opinion, really helps to get a reproducible environment.

First time I read from you. I’m eager to read more.

I appreciate you documenting your experience; I see a lot of similarities with a past job where we made the same transition, with very similar problems.

I’d be more interested in how you built out documentation within JIRA itself rather than putting it in Confluence.

One thing I’ll recommend for SRE teams is capturing performance metrics from applications, services, and systems through Graphite/InfluxDB/Prometheus/etc, so that 1) your SRE/NOC team can build team- or silo-specific dashboards to see what’s going on easily, and 2) your monitoring system can build alerts against the same data for notifications. We ended up embedding these dashboards within Confluence runbooks/playbooks, followed by diagnosing/triaging, resolving, and escalation information. We also associated these runbooks/playbooks with the alerts, and had the links output into the operational chat along with the alert in question so people could easily follow them.

I definitely want to encourage others to automate as much of the recovery from these alerts as possible, along with the other operational processes within your organization. It’s embarrassing to tell management one of your team members caused an outage because they failed to copy and paste commands from the runbook in the proper order. Whether you use Ansible, Puppet, Chef, SaltStack, etc, invest in an automation framework.

Lastly, I definitely recommend checking out and watching BigPanda’s Monitoringscape (https://bigpanda.io/monitoringscape/) page as they track new and mature technologies most SRE teams leverage.

Hi Ian,

I am the editor of InfoQ China which focuses on software development. We like this article and wish to translate it.

Before we translate it into Chinese and publish it on our website, I want to ask for your permission first. This translation is provided for informational purposes only, and will not be used commercially.

In exchange, we will put the English title and link at the beginning of Chinese article. If our readers want to read more about this, they can click back to your website.

Thanks a lot; I hope you can help. If you have any more questions, please let me know.

Sure, please go ahead.

Thanks for that very interesting article!

I was particularly interested to read the part about “Culture”, but you are not very specific there, beyond saying that you should invest in it in preference to other areas.

Could you give more details about what “Culture” means for you (or meant, in the position you are describing here) and what it would mean to invest in culture?

Thanks!

Good question. Started a response, but I’m wondering whether it’s a separate article…

I certainly wouldn’t mind a response as good as this article in its own dedicated article 😀

Also would love to hear more on this. I get a lot of pushback when talking about the importance of culture – a success story is always worth sharing. 🙂 Thanks so much for taking the time to write out your thoughts.

Do you have an example format for your KB/RunBook entries?